GSA SER Verified Lists Vs Scraping

GSA SER Verified Lists vs Scraping: Understanding the Core Difference

Anyone running GSA Search Engine Ranker eventually faces the same critical decision: should you invest in pre-verified lists or build your own targets through scraping? The phrase "GSA SER verified lists vs scraping" captures a fundamental divide in how users approach link building efficiency. The choice impacts not just the volume of submitted links, but the quality, domain diversity, and ultimately the survival rate of your campaigns.

What Exactly Are GSA SER Verified Lists?

Verified lists are curated, pre-tested collections of target URLs. Sellers or community members run these URLs through GSA SER itself, often using multiple test projects, and filter out anything that fails to accept submissions, returns errors, or shows immediate signs of being banned. What remains is a cleaned database of platforms known to work—think thousands of blog comments, guestbooks, forums, and article directories where submission engines have a proven chance of placing a live link.

The main advantage is time. Instead of spending days or weeks scraping raw footprints, filtering dead domains and testing each engine, you import a list and GSA SER begins posting within minutes. These lists often come categorized by platform type, language, and even PageRank or domain authority tiers. For newcomers or those managing dozens of campaigns simultaneously, this plug-and-play nature is hard to beat.

The Scraping Approach: Building Your Own Target Lists

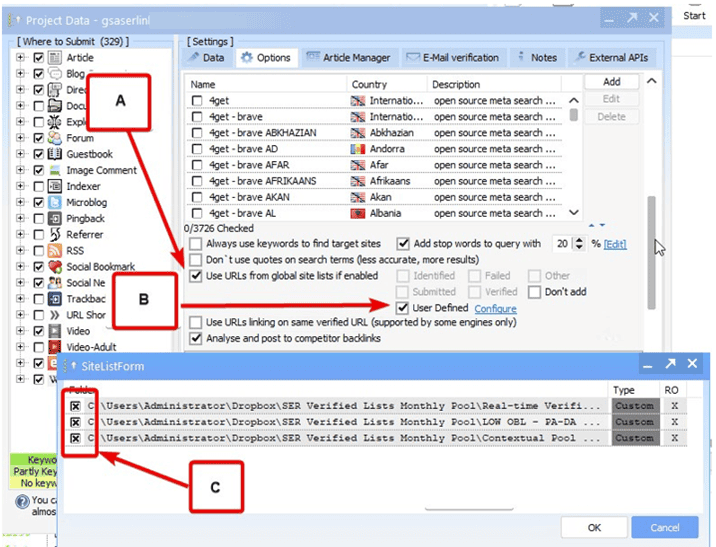

Scraping involves harvesting URLs straight from search engines using footprint strings. You configure GSA SER’s built-in search engine scraper or external tools to query Google, Bing, and other sources with phrases like “powered by WordPress” combined with “leave a reply.” The raw output is massive, unfiltered, and full of noise. You then rely on GSA SER’s internal processing (checking for duplicates, identifying engine types, testing captcha cost) to gradually whittle the list down into something usable.
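The footprint-plus-keyword queries described above can be generated mechanically. What follows is a minimal sketch, not GSA SER's own scraper: `build_queries` is a hypothetical helper, the keywords are made-up placeholders, and only the two footprint phrases come from the paragraph above. It builds query strings and does not contact any search engine.

```python
from itertools import product


def build_queries(footprints, keywords):
    """Combine each footprint group with each keyword into one search query.

    Every phrase in a footprint group is wrapped in double quotes so a
    search engine would treat it as an exact phrase.
    """
    queries = []
    for group, keyword in product(footprints, keywords):
        quoted = " ".join(f'"{phrase}"' for phrase in group)
        queries.append(f"{quoted} {keyword}")
    return queries


# Footprints taken from the text; keywords are illustrative assumptions.
footprints = [("powered by WordPress", "leave a reply")]
keywords = ["gardening", "home brewing"]

queries = build_queries(footprints, keywords)
for query in queries:
    print(query)
```

Varying the keyword list is what makes a scraped list niche-specific; the footprint only narrows results to a platform type.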

The primary appeal here is freshness and ownership. A list you scrape yourself has not been sold or circulated to other GSA SER users, although others may independently find some of the same URLs. No footprints are shared, and the domains are not being overused by a thousand other link builders hitting the same targets. This can lead to higher acceptance rates on platforms that receive few submissions, and less chance of your IP or project being flagged because the targets aren’t on a public blacklist.

Speed and Efficiency: Verified Lists Win the First Round

When evaluating "GSA SER verified lists vs scraping" purely on immediate campaign velocity, verified lists dominate. As a rough guide, a good verified list can reach a 40-70% successful submission rate right away, while a raw scraped list often hovers below 15% until extensively filtered. For tier 2 and tier 3 link building, where volume matters more than purity, skipping the scraping step can save hours of CPU time and proxy bandwidth.
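To put those percentages in concrete terms, here is a small worked calculation. The list size of 10,000 URLs is an assumption chosen for illustration; the rates are the ones quoted above, not measurements.

```python
def expected_successes(list_size, rate_percent):
    """Return the expected number of successful submissions (integer math)."""
    return list_size * rate_percent // 100


LIST_SIZE = 10_000  # hypothetical list size

verified_low = expected_successes(LIST_SIZE, 40)
verified_high = expected_successes(LIST_SIZE, 70)
scraped_ceiling = expected_successes(LIST_SIZE, 15)

print(f"verified list: {verified_low} to {verified_high} submissions")
print(f"raw scraped list: fewer than {scraped_ceiling} submissions")
```

On these assumptions, the same 10,000 URLs yield roughly three to five times more submissions from a verified list than from an unfiltered scrape.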

However, the initial speed comes with a hidden cost. Verified lists age rapidly. A list that performed brilliantly last week might see 30% of its URLs disappear—sites get deleted, antispam plugins update, engines ban certain footprints. You must constantly refresh your stock of verified lists, which means either paying for regular updates or joining active communities where fresh lists are traded.

Quality and Uniqueness: Where Scraping Pulls Ahead

Scraping lets you build lists tailored to highly specific niches. You can search for footprints related to a particular industry, language, or even a specific CMS version that others rarely target. The resulting list is unique and untouched. Since no one else is hammering these exact URLs simultaneously, the links you create face less immediate scrutiny from webmasters and spam filters.

There’s also the matter of spam score clustering. Verified lists, especially the popular ones, concentrate thousands of GSA SER users onto a relatively small pool of domains. Over time, those domains accumulate toxic links and either get deindexed or start applying nofollow and moderation more aggressively. Scraping your own targets circumvents this herd mentality, giving you a fresh playing field each time you harvest.
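One way to see how concentrated a list is on a small pool of domains is to count URLs per host. This is a sketch using only the Python standard library; the URLs are placeholders and `domain_counts` is a hypothetical helper, not a GSA SER feature.

```python
from collections import Counter
from urllib.parse import urlsplit


def domain_counts(urls):
    """Count how many URLs in the list belong to each host."""
    counts = Counter()
    for url in urls:
        host = urlsplit(url).hostname
        if not host:
            continue  # skip lines that are not parseable URLs
        if host.startswith("www."):
            host = host[4:]
        counts[host] += 1
    return counts


# Placeholder target list.
targets = [
    "http://blog.example.com/post-1",
    "http://blog.example.com/post-2",
    "https://www.example.org/guestbook",
    "http://forum.example.net/thread/7",
]

counts = domain_counts(targets)
diversity = len(counts) / len(targets)  # unique hosts per URL
print(counts.most_common(1), diversity)
```

A diversity figure close to 1.0 means nearly every URL sits on its own host; a low figure suggests many links would land on the same few domains.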

Cost Analysis: Time Is Money, But So Are Resources

Purchasing verified lists has an obvious monetary price. High-quality, regularly updated lists from reputable sellers aren’t free, and cheap ones often underperform. Scraping costs less in direct cash but demands significant system resources. High-quality proxies, efficient captcha-solving services, and a powerful server to run scraping tasks nonstop can add up. Plus, you need the technical know-how to avoid scraping garbage. If you’re already paying for tools like ScrapeBox alongside GSA SER, the indirect costs of DIY scraping might match or exceed buying verified data.

For many experienced users, the sweet spot is a hybrid. They buy one solid verified list to kickstart a campaign and immediately begin scraping fresh targets in parallel. This way the engine always has work to do, but the foundation isn’t reliant entirely on the same worn-out domains everyone else is using.
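The hybrid approach amounts to merging two URL lists without duplicates while remembering where each target came from. Below is a minimal sketch under the assumption that both lists are plain collections of URLs; `normalize` and `merge_targets` are hypothetical helpers and the URLs are placeholders.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize(url):
    """Lowercase the host, drop the fragment and any trailing slash."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/")
    return urlunsplit((parts.scheme, parts.netloc.lower(), path, parts.query, ""))


def merge_targets(verified, scraped):
    """Merge both lists into {url: source}; verified entries win on conflict."""
    merged = {}
    for source, urls in (("verified", verified), ("scraped", scraped)):
        for url in urls:
            merged.setdefault(normalize(url), source)
    return merged


verified = ["http://example.com/guestbook/"]
scraped = ["http://EXAMPLE.com/guestbook", "http://example.net/forum"]

merged = merge_targets(verified, scraped)
print(merged)
```

Keeping the source label makes it possible to compare later how the purchased and the self-scraped portions perform.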

Maintenance and Adaptability

Search engines constantly change their algorithms and footprint detection. A scraper must be updated to reflect what works today. Verified list sellers also have to adapt, but there’s an inherent lag. If Google deindexes a certain type of forum overnight, your pre-purchased list still contains those dead URLs until you clean it again. With scraping, you can react faster—adjust your footprints, switch to a different search engine, or focus only on platforms that survived the latest update.

On the flip side, scraping without understanding platform quality markers can fill your list with spam traps, honeypots, or simply useless pages that return an OK status but never actually publish a link. Verified lists, when created by experienced hands, pre-filter these junk targets. You’re paying for someone else’s hours of weeding out the 404s, 500s and cloaked redirects.
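The weeding-out of 404s, 500s and redirects can be approximated with a simple classifier over already-collected HTTP results. This sketch assumes you have recorded, for each URL, the final status code and the final URL after redirects; the records below are made up, and `classify` is a hypothetical helper. It cannot detect pages that return an OK status but never publish a link, which still requires a test submission.

```python
from urllib.parse import urlsplit


def classify(url, status, final_url):
    """Label a target as 'dead', 'redirected' or 'keep'."""
    if status >= 400:
        return "dead"  # covers 404, 500 and other error responses
    if urlsplit(final_url).hostname != urlsplit(url).hostname:
        return "redirected"  # ended up on a different host
    return "keep"  # worth testing, not proof that a link will stick


# (url, final status, final url) tuples; all values are placeholders.
results = [
    ("http://example.com/guestbook", 200, "http://example.com/guestbook"),
    ("http://example.net/old", 404, "http://example.net/old"),
    ("http://example.org/forum", 200, "http://ads.example.info/landing"),
    ("http://example.edu/blog", 500, "http://example.edu/blog"),
]

kept = [url for url, status, final in results
        if classify(url, status, final) == "keep"]
print(kept)
```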

Security and Footprint Overlap

Every imported verified list leaves a footprint. If a list is widely circulated, the source URLs inside it can be cross-referenced to identify GSA SER usage patterns. Scraping, provided you use fresh proxies and vary your footprint combinations, leaves less of an orchestrated signature. Competitors can’t simply buy the same list and profile your entire backlink strategy. For sensitive money-site projects, this alone tilts the balance toward scraping, even with the extra effort involved.
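Overlap with a widely circulated list can be estimated by comparing domain sets. The sketch below assumes both lists are available as plain URL collections; `shared_fraction` is a hypothetical helper and the URLs are placeholders.

```python
from urllib.parse import urlsplit


def domains(urls):
    """Return the set of hostnames found in a list of URLs."""
    hosts = (urlsplit(url).hostname for url in urls)
    return {host for host in hosts if host}


def shared_fraction(own_urls, public_urls):
    """Fraction of your own domains that also appear in the public list."""
    own = domains(own_urls)
    if not own:
        return 0.0
    return len(own & domains(public_urls)) / len(own)


own_list = [
    "http://a.example/x",
    "http://b.example/y",
    "http://c.example/z",
    "http://d.example/w",
]
public_list = ["http://a.example/other", "http://z.example/"]

overlap = shared_fraction(own_list, public_list)
print(overlap)
```

The lower this fraction, the less of your backlink profile someone could reconstruct simply by obtaining the same public list.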

Ultimately, the debate around "GSA SER verified lists vs scraping" boils down to a trade-off between convenience and control. No single method guarantees eternal success. Those who treat link building as a dynamic pipeline—validating sources constantly, blending pre-tested and freshly discovered targets, and monitoring LPM and verified totals daily—are the ones who outlast algorithm shifts and maintain consistent indexing rates. The tool itself doesn’t care where the URLs come from; it only rewards well-managed inputs.
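Daily monitoring can be as simple as two ratios. This sketch assumes LPM is read as links submitted per minute and that you note submitted and verified totals by hand or from your own logs; the numbers are invented and the functions are hypothetical helpers, not part of GSA SER.

```python
def links_per_minute(submitted, minutes):
    """Submitted links divided by elapsed run time in minutes."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return submitted / minutes


def verified_ratio(verified, submitted):
    """Share of submitted links that were later verified as live."""
    return verified / submitted if submitted else 0.0


# Invented daily figures for illustration only.
day = {"submitted": 1200, "verified": 300, "minutes": 60}

lpm = links_per_minute(day["submitted"], day["minutes"])
ratio = verified_ratio(day["verified"], day["submitted"])
print(f"LPM: {lpm:.1f}, verified ratio: {ratio:.0%}")
```

Tracking both figures side by side shows whether a high submission speed is actually turning into live links.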
